Machine learning operations (MLOps) is a set of practices that combines machine learning, DevOps, and data engineering to automate and streamline the entire machine learning lifecycle. From model development and training to deployment and monitoring, MLOps ensures that ML models can be built, deployed, and maintained reliably at scale.

Think of MLOps as the bridge between experimental data science work and production-ready systems. While a data scientist might create a brilliant model on their laptop, MLOps provides the framework to deploy that model safely, monitor its performance, and update it when needed, all without breaking existing systems.

Why MLOps Matters

Traditional software development has established practices like version control, automated testing, and continuous integration. Machine learning projects, however, face unique challenges that standard DevOps practices don’t address:

- Data dependencies: Models depend on data quality and availability, which can change over time

- Model drift: Real-world data evolves, causing model performance to degrade

- Reproducibility: ML experiments involve multiple variables (data versions, hyperparameters, code) that must be tracked

- Compliance and governance: Models making business decisions need audit trails and explainability

Without proper MLOps practices, organisations often struggle with models that work in development but fail in production, or worse, models that silently degrade over time without anyone noticing.

Core Components of MLOps

Version Control and Experiment Tracking

MLOps extends traditional version control beyond code to include data versions, model artifacts, and experiment parameters. Tools like MLflow, Weights & Biases, or Neptune track every experiment, making it possible to reproduce results and compare model performance across different iterations.
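
To make the idea concrete, here is a minimal sketch of what an experiment tracker records, written with only the Python standard library. It is not the API of MLflow, Weights & Biases, or Neptune; the function names (`fingerprint`, `log_run`, `best_run`) and the JSON-lines log format are assumptions chosen for illustration.

```python
import hashlib
import json
from pathlib import Path


def fingerprint(data: bytes) -> str:
    """Return a short content hash so a run can be tied to an exact data version."""
    return hashlib.sha256(data).hexdigest()[:12]


def log_run(log_path: Path, params: dict, metrics: dict, data: bytes) -> dict:
    """Append one experiment record (params, metrics, data version) as a JSON line."""
    record = {
        "params": params,
        "metrics": metrics,
        "data_version": fingerprint(data),
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def best_run(log_path: Path, metric: str) -> dict:
    """Load every logged run and return the one with the highest value of `metric`."""
    with log_path.open(encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])
```

Because every record carries the hyperparameters and a hash of the training data, two runs can be compared knowing whether they saw the same data. Dedicated tracking tools add storage for model artifacts, a UI, and team access on top of this basic idea.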

Automated Testing

ML systems require different types of testing than traditional software. This includes data validation (checking for schema changes or data quality issues), model validation (ensuring performance meets thresholds), and integration testing (verifying the model works within the broader system).
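
The first two kinds of test can be sketched in a few lines. The schema below (`age`, `income`, `country`) and the 0.9 accuracy threshold are hypothetical values picked for the example, not recommendations; real projects often use a dedicated validation library instead of hand-written checks.

```python
EXPECTED_SCHEMA = {"age": int, "income": float, "country": str}  # hypothetical columns


def validate_rows(rows, schema=EXPECTED_SCHEMA):
    """Data validation: return a list of problems; an empty list means the batch passed."""
    problems = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
        for column, expected_type in schema.items():
            if column in row and not isinstance(row[column], expected_type):
                problems.append(
                    f"row {i}: {column} should be {expected_type.__name__}, "
                    f"got {type(row[column]).__name__}"
                )
    return problems


def meets_threshold(y_true, y_pred, min_accuracy=0.9):
    """Model validation: check accuracy on a held-out set against a minimum."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true) >= min_accuracy
```

Checks like these are typically run as ordinary unit tests, so a schema change or an underperforming model fails the build in the same way a code bug would.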

Continuous Integration and Deployment (CI/CD)

MLOps adapts CI/CD practices for machine learning workflows. This might involve automatically retraining models when new data arrives, running validation tests before deployment, and gradually rolling out model updates to minimise risk.
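
As a rough illustration of that flow, the sketch below runs pipeline steps in order and stops at the first failure, so a model that fails validation never reaches the rollout step. The step names, the accuracy figures, and the `min_gain` margin are all hypothetical; a real pipeline would run in a CI/CD system and call actual training and evaluation jobs.

```python
from typing import Callable


def run_pipeline(steps: list[tuple[str, Callable[[], bool]]]) -> list[str]:
    """Run steps in order and stop at the first failure, so a failed
    validation can never be followed by a deployment."""
    log = []
    for name, step in steps:
        passed = step()
        log.append(f"{name}: {'ok' if passed else 'FAILED'}")
        if not passed:
            break
    return log


def beats_production(candidate_accuracy: float, production_accuracy: float,
                     min_gain: float = 0.01) -> bool:
    """Promotion gate: the candidate must beat the live model by a margin."""
    return candidate_accuracy - production_accuracy >= min_gain


# Hypothetical numbers standing in for real training and evaluation jobs.
log = run_pipeline([
    ("retrain on new data", lambda: True),
    ("validate candidate", lambda: beats_production(0.84, 0.86)),
    ("deploy to 5% of traffic", lambda: True),
])
print(log)
```

Here the candidate scores lower than the production model, so the log ends at the failed validation step and the deployment step is never attempted.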

Monitoring and Observability

Production ML systems need comprehensive monitoring that goes beyond traditional application metrics. This includes tracking model performance, data drift, feature distributions, and business metrics to ensure models continue performing as expected.
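
One common way to quantify data drift for a single numeric feature is the population stability index (PSI), which compares the binned distribution seen in training with the one seen in production. The sketch below is a simplified version under stated assumptions: equal-width bins taken from the training range, a small floor to avoid dividing by zero, and a caller-chosen alert threshold. Monitoring tools offer more robust tests than this.

```python
import math


def psi(expected, actual, bins=10):
    """Population stability index between a reference sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each share at a tiny value so the logarithm is always defined.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def has_drifted(expected, actual, threshold=0.2):
    """Flag drift when PSI exceeds a threshold (0.2 is an assumed default here)."""
    return psi(expected, actual) > threshold
```

Identical distributions give a PSI of zero, and the value grows as the production data moves away from the training data. The right threshold depends on the feature and the cost of a false alarm, so treat the default as a starting point.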

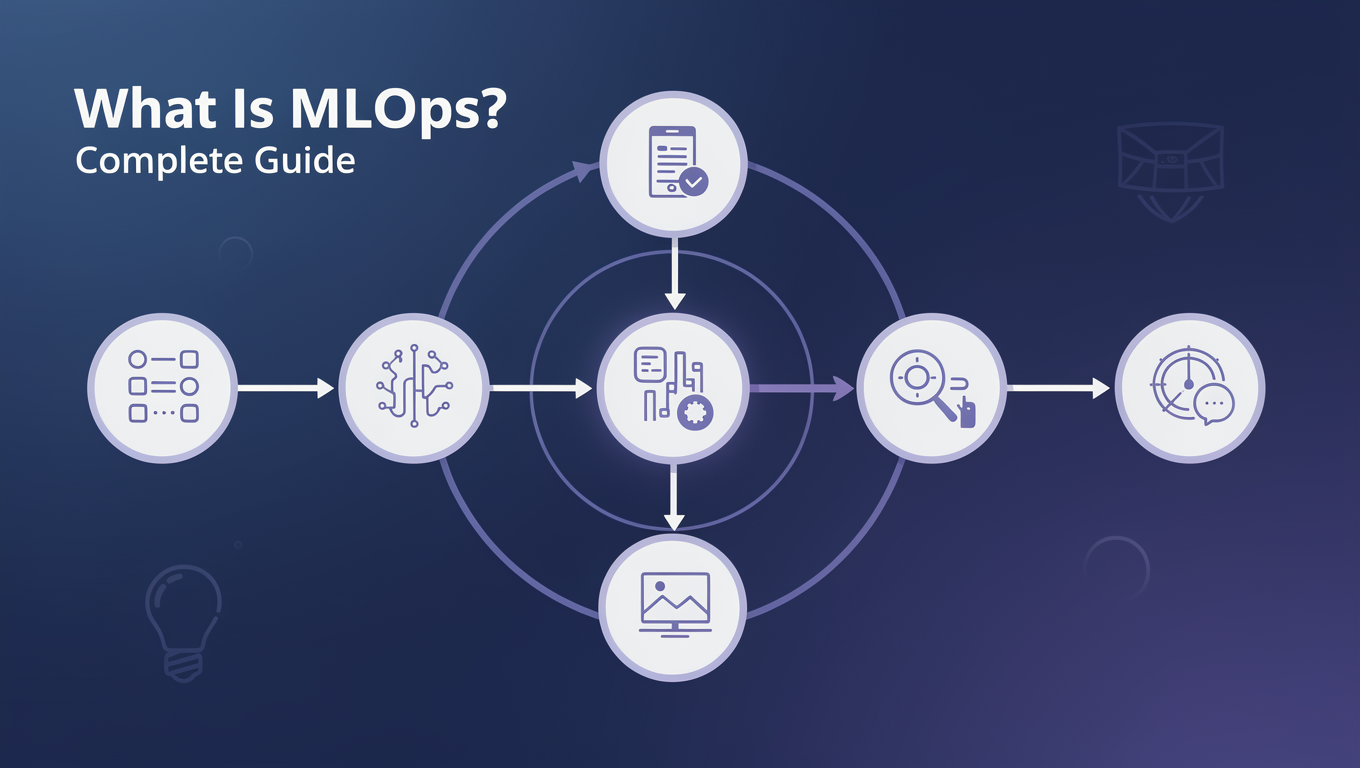

The MLOps Lifecycle

Development Phase

During development, data scientists and ML engineers collaborate to build and validate models. MLOps practices ensure this work is reproducible and trackable. Key activities include:

- Data exploration and feature engineering

- Model training and hyperparameter tuning

- Experiment tracking and comparison

- Model validation and testing

Deployment Phase

Moving models from development to production requires careful orchestration. MLOps provides frameworks for:

- Model packaging and containerisation

- Automated deployment pipelines

- A/B testing and canary releases

- Infrastructure provisioning and scaling
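
A canary release from the list above can be sketched as deterministic traffic splitting: each user is hashed into one of 100 buckets, and only the lowest buckets are sent to the new model. The function name `route` and the labels `"canary"` and `"stable"` are illustrative assumptions; in practice this logic usually lives in a load balancer or serving platform.

```python
import hashlib


def route(user_id: str, canary_percent: int) -> str:
    """Send a stable, roughly `canary_percent` share of users to the new model."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"
```

Hashing the user ID rather than picking at random means a given user always sees the same model version, and raising the percentage only ever adds users to the canary group, which keeps comparisons between the two groups clean.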

Operations Phase

Once deployed, models require ongoing maintenance and monitoring. This includes:

- Performance monitoring and alerting

- Data drift detection

- Model retraining and updates

- Incident response and rollback procedures
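
Performance alerting and rollback can be tied together with a rolling window over recent predictions. The class below is a minimal sketch that assumes ground-truth labels arrive soon after each prediction, which is not true for every use case; the window size and accuracy floor are hypothetical defaults.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent predictions and flag degradation."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.8):
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual) -> None:
        self.outcomes.append(prediction == actual)

    @property
    def accuracy(self):
        """Accuracy over the window, or None before any outcome is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_roll_back(self) -> bool:
        """Only alert once the window is full, to avoid reacting to a few samples."""
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy < self.min_accuracy
```

A serving loop could call `record` as labels arrive and switch traffic back to the previous model version when `should_roll_back` returns true.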

MLOps vs DevOps: Key Differences

While MLOps builds on DevOps principles, machine learning introduces unique complexities:

Data as a first-class citizen: Unlike traditional software, ML systems depend heavily on data quality and availability. MLOps must account for data versioning, validation, and pipeline management.

Model performance degradation: Software bugs are typically deterministic and reproducible. ML models can silently degrade as real-world conditions change, requiring continuous monitoring and retraining.

Experimental nature: ML development involves extensive experimentation with different algorithms, features, and hyperparameters. MLOps must support this iterative process while maintaining reproducibility.

Regulatory requirements: Many ML applications face strict regulatory requirements around model explainability, bias detection, and audit trails.

If you’re already familiar with DevOps practices, you’ll find many concepts transfer well to MLOps. Our comprehensive guide to DevOps courses covers the foundational practices that underpin effective MLOps implementation.

Essential MLOps Tools and Technologies

Orchestration Platforms

Tools like Apache Airflow or Kubeflow Pipelines orchestrate complex ML workflows, managing dependencies between data processing, training, and deployment steps.

Model Serving Platforms

Platforms such as TensorFlow Serving, Seldon Core, or cloud-native solutions like Amazon SageMaker handle model deployment, scaling, and serving predictions to applications.

Monitoring and Observability

Specialised ML monitoring tools like Evidently AI, WhyLabs, or built-in cloud monitoring services track model performance, data drift, and system health.

Feature Stores

Feature stores like Feast or cloud-managed solutions provide centralised repositories for ML features, ensuring consistency between training and serving environments.
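
The consistency guarantee comes from defining each feature once and using that single definition in both training and serving. The sketch below shows the idea with a small in-process registry; it is not the Feast API, and the feature names and raw fields are hypothetical.

```python
FEATURES = {}  # feature name -> function that computes it from a raw record


def feature(name):
    """Decorator that registers a feature definition under a shared name."""
    def register(fn):
        FEATURES[name] = fn
        return fn
    return register


@feature("income_per_dependant")
def income_per_dependant(raw):
    return raw["income"] / (raw["dependants"] + 1)


@feature("is_adult")
def is_adult(raw):
    return int(raw["age"] >= 18)


def build_features(raw, names):
    """Used by both the training job and the serving endpoint."""
    return {name: FEATURES[name](raw) for name in names}
```

Because the training pipeline and the prediction service both call `build_features`, a change to a feature definition reaches both at once, which avoids the training-serving skew that arises when the logic is reimplemented separately. A real feature store adds storage, point-in-time lookups, and low-latency retrieval on top of this.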

Building MLOps Capabilities

Implementing MLOps requires a combination of technical skills, cultural changes, and tool adoption. Organisations typically start with basic practices like experiment tracking and automated testing before progressing to more sophisticated capabilities like automated retraining and advanced monitoring.

The key is starting small and building incrementally. Begin with version control for your ML code and data, then add automated testing and deployment pipelines. As your practices mature, introduce more advanced monitoring and automated retraining capabilities.

For teams looking to build these capabilities, AIU.ac’s curated course collection includes comprehensive MLOps training from leading providers. Our platform brings together over 6,000 courses from Pluralsight, 140+ from Educative, and content from other top providers, making it easy to find the right training for your team’s needs.

MLOps Career Paths and Skills

MLOps creates new career opportunities at the intersection of data science, software engineering, and operations. Key roles include:

MLOps Engineers: Focus on building and maintaining ML infrastructure, deployment pipelines, and monitoring systems. They need strong software engineering skills plus understanding of ML workflows.

ML Platform Engineers: Design and build internal platforms that enable data scientists and ML engineers to work more effectively. This role requires deep knowledge of distributed systems and cloud platforms.

ML Infrastructure Architects: Define the overall technical strategy for ML systems, including tool selection, architecture decisions, and governance frameworks.

Essential skills for MLOps roles include:

- Software engineering fundamentals (Python, Git, testing)

- Cloud platforms and containerisation (Docker, Kubernetes)

- CI/CD tools and practices

- ML frameworks and model serving technologies

- Monitoring and observability tools

- Data engineering and pipeline management

Common MLOps Challenges

Organisational Alignment

MLOps requires collaboration between data science, engineering, and operations teams. Different priorities, tools, and working styles can create friction. Success requires clear communication, shared goals, and often new organisational structures.

Tool Complexity

The MLOps ecosystem includes hundreds of tools, each solving specific problems. Choosing the right combination and avoiding tool sprawl requires careful evaluation and standardisation efforts.

Data Management

ML systems depend on high-quality, accessible data. Poor data governance, inconsistent formats, and data silos can undermine even the best MLOps practices.

Scaling Challenges

What works for a single model might not scale to dozens or hundreds of models. MLOps practices must evolve as organisations mature their ML capabilities.

Getting Started with MLOps

For individuals looking to enter the MLOps field, start by building foundational skills in both machine learning and software engineering. Understanding how ML models work is crucial, but equally important is knowing how to build reliable, scalable systems.

Practical experience is invaluable. Start with personal projects that involve deploying ML models, even simple ones. Practice using tools like Docker, Git, and cloud platforms. Build end-to-end pipelines that include data processing, model training, and deployment.

Consider structured learning through professional courses that cover MLOps practices. Look for content that combines theoretical knowledge with hands-on experience using industry-standard tools.

The Future of MLOps

MLOps continues evolving as organisations scale their ML operations. Emerging trends include:

AutoML integration: Automated machine learning tools are becoming part of MLOps pipelines, reducing the manual effort required for model development and tuning.

Edge deployment: As more ML models run on edge devices, MLOps practices are adapting to handle distributed deployments and offline scenarios.

Responsible AI: Growing focus on fairness, explainability, and bias detection is driving new MLOps tools and practices around model governance.

Real-time ML: Demand for real-time predictions is pushing MLOps towards streaming architectures and low-latency serving solutions.

Frequently Asked Questions

How difficult is MLOps?

MLOps difficulty varies significantly based on your background and the complexity of your use case. If you have software engineering experience, many concepts will feel familiar since MLOps builds on established DevOps practices. The main challenges are understanding ML-specific requirements like data drift monitoring and model versioning. For those new to both ML and operations, expect a steeper learning curve, but the field is very learnable with structured practice. Start with simple projects and gradually increase complexity as your skills develop.

Do I need a machine learning background to work in MLOps?

While deep ML expertise isn’t always required, understanding how ML models work is essential for effective MLOps. You need to know enough about model training, evaluation, and common failure modes to build appropriate infrastructure and monitoring. Many successful MLOps engineers come from software engineering or DevOps backgrounds and learn ML concepts on the job. The key is being comfortable with both technical systems and the unique challenges that ML introduces.

What’s the difference between MLOps and DataOps?

DataOps focuses on improving data pipeline reliability, quality, and delivery speed across the organisation. It’s about getting clean, reliable data to anyone who needs it. MLOps specifically addresses the challenges of deploying and maintaining machine learning models in production. While there’s overlap (both need good data pipelines), MLOps includes model-specific concerns like performance monitoring, A/B testing, and automated retraining. Many organisations implement both practices as complementary approaches.

Which cloud platform is best for MLOps?

All major cloud providers (AWS, Google Cloud, Azure) offer comprehensive MLOps capabilities, and the best choice depends on your existing infrastructure and specific requirements. AWS provides mature services like SageMaker for end-to-end ML workflows. Google Cloud excels in AI/ML innovation with tools like Vertex AI. Azure integrates well with Microsoft’s ecosystem and offers strong enterprise features. Consider factors like existing cloud relationships, team expertise, specific tool requirements, and cost when making your decision.

How long does it take to implement MLOps in an organisation?

MLOps implementation timelines vary dramatically based on organisational maturity, existing infrastructure, and scope. Basic practices like experiment tracking and automated testing can be implemented in weeks. Building comprehensive MLOps capabilities including automated deployment, monitoring, and governance typically takes 6 to 18 months. The key is starting with high-impact, low-complexity improvements and building incrementally. Don’t try to implement everything at once. Focus on solving your most pressing problems first, then expand your capabilities over time.