Handling Batch Data with Apache Spark on Databricks

Data pipelines at scale demand Spark expertise, and Databricks makes it accessible. This course teaches you how to process large batch datasets efficiently, without requiring you to master Spark internals first. You’ll move from theory to production-ready patterns in under 2.5 hours.

AIU.ac Verdict: Ideal for data engineers and analysts stepping into distributed computing or optimising existing Spark workflows. The hands-on Databricks environment is a genuine advantage, though you’ll need foundational SQL and Python familiarity to keep pace.

What This Course Covers

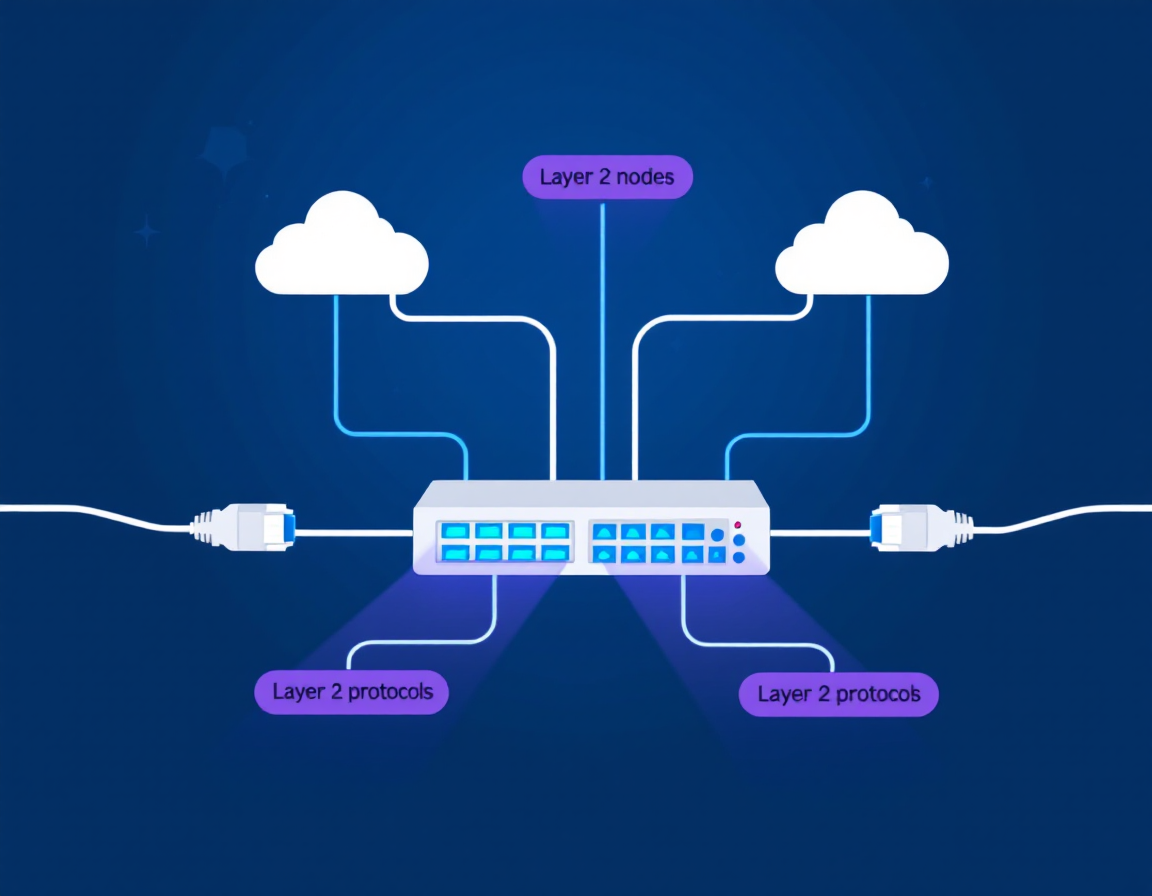

The course opens with Spark’s architecture and its RDD and DataFrame abstractions, then moves into practical batch job design: reading diverse data sources, applying transformations and aggregations, and writing results back to storage. You’ll work through partitioning strategies, caching patterns, and performance tuning, which are the decisions you’ll face in production.
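To make the idea of partitioning and aggregation more concrete, here is a minimal sketch in plain Python. It is not Spark code and is not taken from the course. It only illustrates the general pattern a distributed engine follows when it groups records by key, aggregates each partition independently, and merges the partial results. The function names, the `sales` sample data, and the choice of `crc32` as the hash are all assumptions made for illustration.

```python
from collections import defaultdict
from zlib import crc32


def partition_by_key(records, num_partitions):
    """Assign each (key, value) record to a partition by hashing its key."""
    partitions = [[] for _ in range(num_partitions)]
    for key, value in records:
        # crc32 is deterministic across runs, unlike Python's built-in
        # hash() for strings, so the layout is reproducible.
        index = crc32(key.encode("utf-8")) % num_partitions
        partitions[index].append((key, value))
    return partitions


def aggregate_partition(partition):
    """Sum values per key within a single partition (no cross-partition access)."""
    totals = defaultdict(float)
    for key, value in partition:
        totals[key] += value
    return dict(totals)


def merge_totals(partials):
    """Combine the per-partition results into one final result."""
    merged = defaultdict(float)
    for partial in partials:
        for key, total in partial.items():
            merged[key] += total
    return dict(merged)


# Hypothetical sample data: (region, sale amount) pairs.
sales = [
    ("emea", 120.0),
    ("apac", 75.5),
    ("emea", 30.0),
    ("amer", 210.0),
    ("apac", 24.5),
]

partitions = partition_by_key(sales, num_partitions=3)
partials = [aggregate_partition(p) for p in partitions]
totals = merge_totals(partials)
print(totals)
```

Because records are partitioned by key, every record for a given region lands in the same partition, so each partition can be aggregated without looking at the others. In a real cluster the per-partition step would run in parallel on different workers, and the choice of partition key and partition count is one of the tuning decisions the course discusses.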

Expect concrete Databricks workflows: setting up clusters, monitoring job execution, and debugging common bottlenecks. Janani Ravi’s instruction emphasises why each pattern matters, not just the syntax. By the end, you’ll be able to build batch pipelines that scale, without having to become a Spark internals expert.

Who Is This Course For?

Ideal for:

- Data engineers building ETL pipelines: Direct application to production workflows; cluster management and optimisation translate immediately.

- Analytics engineers moving to distributed systems: Bridge from SQL/Python to Spark; Databricks environment mirrors real team setups.

- Data analysts handling growing dataset volumes: Learn when and how to scale beyond single-machine tools without deep infrastructure knowledge.

May not suit:

- Complete programming beginners: Assumes comfort with Python and SQL; no time spent on syntax fundamentals.

- Streaming-focused engineers: Batch-only scope; Spark Streaming and real-time patterns aren’t covered.

Frequently Asked Questions

How long does Handling Batch Data with Apache Spark on Databricks take?

2 hours 21 minutes of video content. Most learners complete it in one or two sittings, though hands-on practice in the Databricks sandbox may extend that.

Do I need Spark experience before starting?

No. The course assumes Python and SQL basics but teaches Spark from first principles. If you’ve never used Spark, you’re the target audience.

Will I have access to a Databricks environment to practise?

Yes. Pluralsight includes sandboxed hands-on labs, so you can run code without setting up your own cluster.

Is this course enough to handle production batch jobs?

It covers the core patterns and decision-making framework. You’ll be confident building and optimising pipelines, though real-world complexity (schema evolution, error handling at scale) typically demands additional on-the-job experience.

Course by Janani Ravi on Pluralsight. Duration: 2h 21m. Last verified by AIU.ac: March 2026.